It was one of those accountability Twitter debates; I had deliberately managed to stay well out of it (despite being tagged in tweets) for about 48 hours; then my fingers itched, ire was raised and there I was again.

#Myth1 – Ofsted Inspectors Contextualise Schools

It’s a “no, you don’t” from me; you’re consistently inconsistent; it always has been and always will be thus.

As a stranger wandering into a school for a couple of hours or days it’s impossible to fully contextualise a school and its outcomes. The information you gather is: too limited; too influenced by the first and most recent things you see; filtered through a set of personal beliefs (biases); and limited by your experiences, particularly, for inspectors, the experience of leading in a challenging area or a challenging school. The judgement you make and advice you give are a bit of a punt.

There is no proven framework (designed using evidence and validated) for inspectors to follow which gives a secure methodology for ensuring their qualitative judgements are contextualised; ergo, we have a problem Houston. Ofsted erroneously keep insisting they contextualise schools; their own data and lack of systemic processes would suggest otherwise.

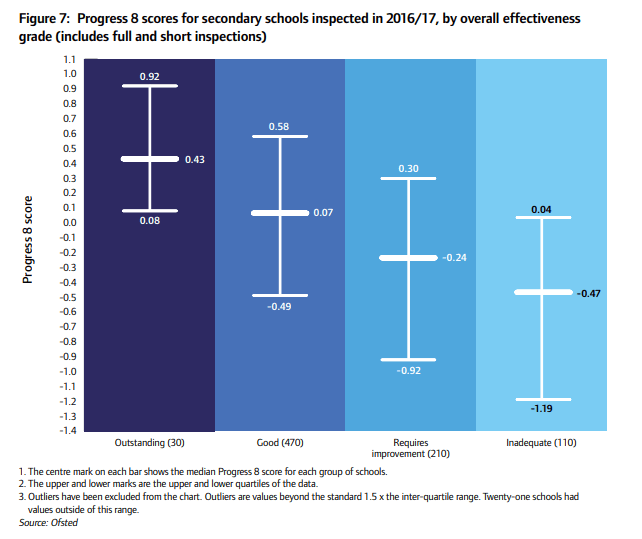

Over the decades, the schools’ accountability system has searched for the Holy Grail of a reliable and valid school effectiveness measure(s); Ofsted has flip-flopped over which metric to use just like the rest of us. Whatever the Government uses in the performance tables becomes Ofsted’s measure of choice, until the Department for Education changes its mind. As schools, many of us blindly follow. Ofsted are clearly interested in data and Progress 8 in particular, as the graph taken from the HMCI Annual Report 2016/17 shows. Ofsted may wish to explain the variance from the median Progress 8 score as evidence of contextualisation; it could equally be explained on the basis of an inherently unreliable and variable inspection process. You can put your money on whichever one you like.

The most important thing at this point is attempting to understand what Progress 8 or any value added system is trying to do. It is an attempt to provide a measure from which a valid conclusion about a school’s effectiveness can be drawn. Progress 8 compares each pupil’s outcomes across a range of GCSE or equivalent qualifications, accredited by the Department for Education for use in the Performance Tables, with the outcomes statistically expected given their Key Stage 2 Reading & Mathematics SATs scores. Progress for each pupil in the school is aggregated and then used as a proxy measure for school effectiveness. This is reasonable to an extent but only to an extent.
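For anyone who wants to see the arithmetic, here is a minimal sketch of the calculation described above. The prior-attainment bands and the national averages are invented for illustration (the real measure uses much finer Key Stage 2 groupings), and the function names are hypothetical.

```python
from statistics import mean

# Hypothetical national average Attainment 8 score for pupils in each
# Key Stage 2 prior-attainment band. Invented numbers, illustration only.
NATIONAL_AVERAGE_A8 = {"low": 30.0, "middle": 48.0, "high": 65.0}


def pupil_progress8(attainment8, ks2_band):
    """Pupil's Attainment 8 minus the average for pupils with the same
    prior attainment, divided by 10 to give a per-slot (roughly per-grade) figure."""
    return (attainment8 - NATIONAL_AVERAGE_A8[ks2_band]) / 10


def school_progress8(pupils):
    """Aggregate pupil scores into the school-level proxy: a simple mean."""
    return mean(pupil_progress8(a8, band) for a8, band in pupils)


# A made-up cohort of three pupils: (Attainment 8 score, KS2 band).
pupils = [(35.0, "low"), (48.0, "middle"), (60.0, "high")]
score = school_progress8(pupils)
```

In this made-up cohort one pupil beats expectation by half a grade per slot, one matches it and one falls short by the same amount, so the school score nets out at zero. Nothing in the calculation knows anything about the pupils other than their prior attainment.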

Progress 8 ignores a number of key factors that affect a pupil’s outcomes but aren’t in the direct control of the school; for example, gender, ethnicity, EAL, level of economic advantage, level of education of the parents. This weakens the use of Progress 8 as a school effectiveness measure as what is being partly/largely measured is a school’s intake. Or, put another way: I think I should be allowed to open a free school for Chinese girls, from affluent backgrounds whose parents both have degrees, who entered the country in Year 6 with almost no English. Watch me smash Progress 8 out of the park whilst actually doing very little! Leading a school with a large percentage of disadvantaged white boys is statistically a career-ender.

By contrast, for the best part of a decade, when I was first appointed as a headteacher, Ofsted’s key data metric was contextualised value added. It was lauded by the government, inspectorate and schools alike as the key measure of a school’s effectiveness. Similar to the trend in the graph above (based on Progress 8 and inspection grades from 2016/17) there was a clear correlation between a school’s CVA and its inspection grade. However, if you dare mention contextualising data nowadays you can be sure someone will jump up and down, condemning you and the use of contextualised data, telling you “I won’t accept low standards for poor kids; what you are suggesting is immoral”. Except Progress 8 is partially contextualised; as with all other progress measures it is contextualised by prior attainment. That is, a pupil’s expected qualification outcomes are based on their fine point score at Key Stage 2. Pupils with a lower Key Stage 2 point score (disproportionately children from disadvantaged backgrounds) are statistically expected to get a lower Attainment 8 point score on which Progress 8 is based.
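To make the difference concrete, here is a rough sketch comparing a measure contextualised by prior attainment only with one that also takes disadvantage into account. It is not the model the Department for Education used for CVA; the expected scores, the single disadvantage flag and the function names are all assumptions invented for illustration.

```python
from statistics import mean

# Invented expected Attainment 8 scores, for illustration only.
EXPECTED_BY_PRIOR = {"low": 30.0, "high": 60.0}
EXPECTED_BY_PRIOR_AND_CONTEXT = {
    ("low", True): 27.0, ("low", False): 33.0,
    ("high", True): 57.0, ("high", False): 63.0,
}


def prior_only_score(pupils):
    """Progress 8 style: expectation depends on prior attainment alone."""
    return mean((a8 - EXPECTED_BY_PRIOR[band]) / 10 for a8, band, _ in pupils)


def contextualised_score(pupils):
    """CVA style: expectation also depends on a disadvantage flag."""
    return mean(
        (a8 - EXPECTED_BY_PRIOR_AND_CONTEXT[(band, disadvantaged)]) / 10
        for a8, band, disadvantaged in pupils
    )


# Two hypothetical schools whose pupils achieve exactly what similar pupils
# achieve nationally. Each pupil: (Attainment 8, KS2 band, disadvantaged?).
school_a = [(27.0, "low", True), (57.0, "high", True)]
school_b = [(33.0, "low", False), (63.0, "high", False)]

prior_only = (prior_only_score(school_a), prior_only_score(school_b))
contextualised = (contextualised_score(school_a), contextualised_score(school_b))
```

On these invented figures the prior-attainment-only measure separates the two schools (one negative, one positive) even though neither is doing better or worse than expected for the pupils it serves; the contextualised measure puts both at zero.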

For individual pupils Progress 8 is irrelevant (Progress 8 is a school effectiveness proxy); what matters to them is attainment and whether they have the passport to the next stage of education, training or employment they want. Attainment data is a poor measure of a school’s effectiveness. However, aggregated attainment data collected for disadvantaged pupils might be a useful proxy measure for the education system’s contribution to social mobility. What you want to conclude affects what you should be measuring.

Some in Ofsted currently believe it’s using an “uncontextualised Progress 8” measure alongside inspectors contextualising schools. Why Ofsted wants its inspectors to contextualise a school as part of the inspection process but won’t use contextualised Progress 8 data is beyond me; it simply appears confused. The organisation has been consistently inconsistent for the past twenty-five years; this sounds like a criticism of Ofsted but it’s not meant to be. They employ humans who, like the rest of us, are fallible and biased; each person is differently biased, hence it depends which inspector/inspection team you get. No data is totally reliable and some measures aren’t suited to the use we have now put them to; that is, there is a reliability and, more critically, a validity problem.

What is now required is greater openness and honesty and acceptance of the consequential significant flaws and limitations of the whole accountability system. Once we collectively reach this point, if we ever do, then there is a very interesting debate to be had about whether our current accountability system is the best way to improve schools within the increasingly limited and scarce resources we have available; there may simply be better ways of improving education than inspecting it or holding it excessively accountable within a punitive system.

#Solution1 – Move to a multi-year contextualised value added score (outliers capped; off-rolled pupils added back in) as one way of assessing whether a school is providing an effective education; agree a national attainment measure for pupils from a disadvantaged background as a way of helping evaluate education’s contribution to social mobility.

#Solution2 – Stop grading schools as part of the inspection process; there is far too great a chance of variability, unreliability and lack of validity in the conclusions drawn.

#Solution3 – Let’s think long and hard about whether inspection is the best way to improve a school; if it isn’t what options would be better and how can we use Ofsted as a resource to serve the needs of the education system?
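As a rough illustration of the multi-year, outlier-capped score suggested in #Solution1, the sketch below caps each pupil’s value added score and then averages across years. The cap of ±2.0, the pupil scores and the function names are all hypothetical; adding off-rolled pupils back in would simply mean including their scores in each year’s list.

```python
from statistics import mean

CAP = 2.0  # hypothetical limit on any single pupil's score, in either direction


def capped(score, cap=CAP):
    """Limit an extreme pupil score so one outlier cannot dominate."""
    return max(-cap, min(cap, score))


def multi_year_score(scores_by_year, cap=CAP):
    """Mean of the yearly means, with each pupil score capped first."""
    yearly_means = [
        mean(capped(score, cap) for score in scores)
        for scores in scores_by_year.values()
    ]
    return mean(yearly_means)


# Invented pupil-level value added scores for three small cohorts.
scores_by_year = {
    2016: [0.5, -0.5, -6.0],  # one extreme outlier
    2017: [1.0, 0.0, -1.0],
    2018: [0.5, 0.5, 2.0],
}

with_cap = multi_year_score(scores_by_year)
without_cap = multi_year_score(scores_by_year, cap=100.0)
```

With these made-up numbers the single extreme pupil in 2016 drags the uncapped three-year figure below zero, while the capped figure stays slightly positive; averaging over three years also stops any one cohort defining the school.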

This is the first of a series of myth busters I believe we will be seeing in the years ahead; more myth busters from me very soon.

Great post

Vic Goddard

Principal

Passmores Academy

Lead school of Passmores Cooperative Learning Community

Posted by vicgoddard | May 1, 2018, 8:10 pm

Cheers Vic 👍

Posted by LeadingLearner | May 1, 2018, 8:11 pm

Ofsted’s “myth busters” are designed to dispel common misconceptions about their inspection processes. They aim to clarify misunderstandings and ensure transparency. It’s a helpful resource for understanding what Ofsted does and doesn’t prioritize during inspections.

Posted by shaheryarqayum19 | June 7, 2024, 5:59 am

Really interesting and important analysis – raises important questions for us to grapple with!

Posted by John Wm Stephens | May 2, 2018, 5:28 pm

Inspection can damage schools. Inspection can also improve schools. Likewise data. We all agree we need data – and we have plenty of it! But most of us know that data mainly provokes questions, and helpful questions if your motivation is to help individual students by prioritising who to help, and what their sticking points might be. But this changes when the motivation of the interpreter (i.e. inspectors) is to evaluate – no longer is data being used to ask questions but to provide answers. And as you suggest, data is far less good at providing one ANSWER. The skill (or the bias!) is in the interpretation. How many of us in education have faith in the scientific accuracy of KS2 results and GCSE results…? We know they are not scientifically accurate, so how can they be reliably used to evaluate? I do believe that inspection can raise standards. But let’s really think about what we need to inspect, and how careful we need to be when we use basically rough-and-ready data for high-stakes evaluation and labelling. Thank you for your interesting post!

Posted by fieldsofthemindeducation | May 4, 2018, 11:33 pm

Thanks for this. Do you have a similar graphic showing inspection outcome vs pupil premium P8?

Posted by searle | June 3, 2018, 11:14 am

No. But Education Datalab will probably be able to help.

Posted by LeadingLearner | June 3, 2018, 12:17 pm